Agentic Harness Engineering: The Framework for Reliable AI Agents

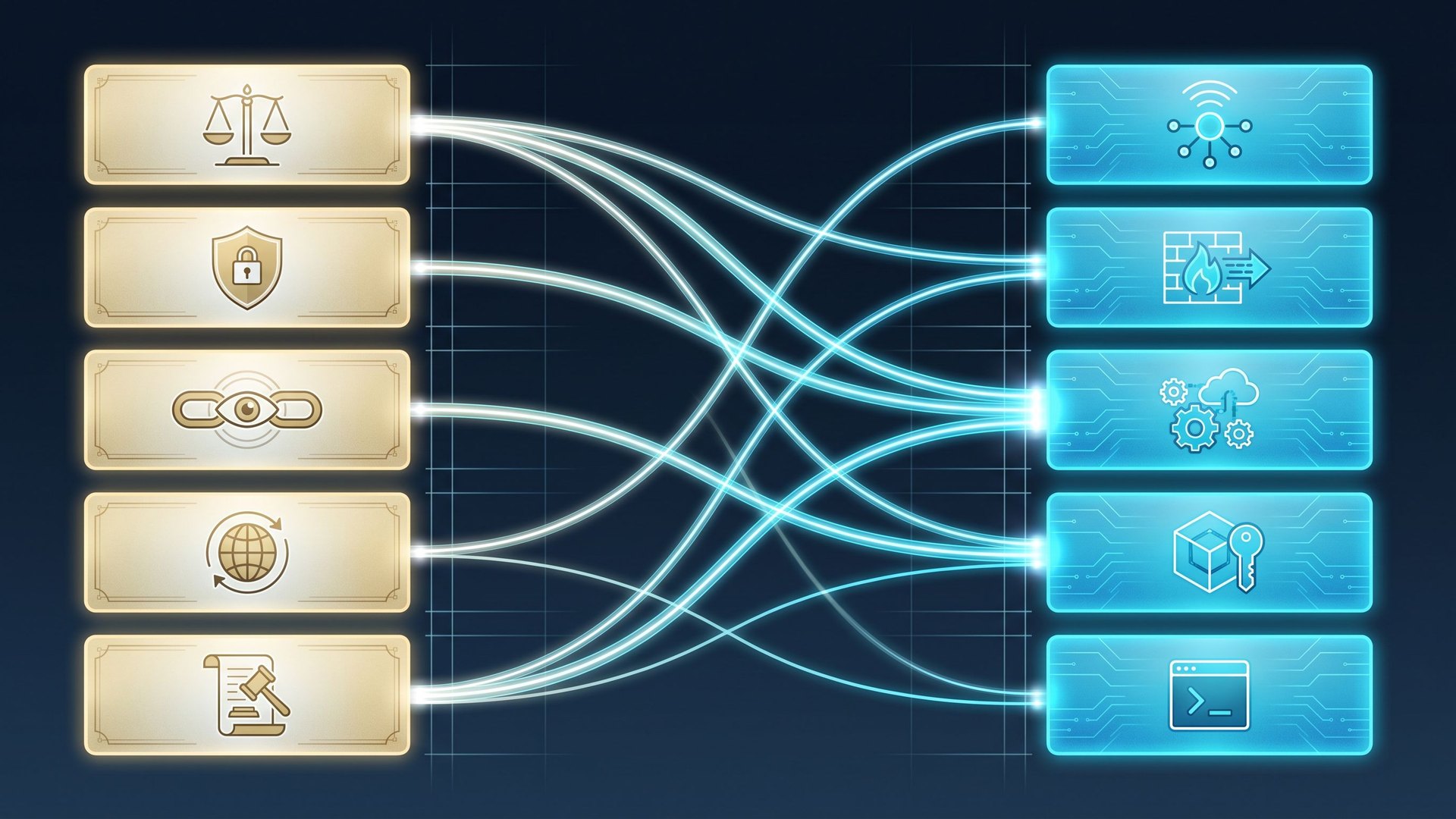

Between a compelling demo agent and a production agent you can trust lies a discipline: Agentic Harness Engineering. The harness (the tool registry, sandbox, memory, sub-agents, hooks, observability and eval loop around the model) decides whether your agent reliably delivers, can actually be stopped, and meets the EU AI Act high-risk obligations that apply from 2 August 2026. This page lays out what a harness is, how to build one, and which patterns have converged in 2026.

- Agent = Model + Harness. If you're not the model, you're the harness — and a good harness with a solid model beats a great model with a bad harness.

- The reliability gap is a harness gap. Both Anthropic and OpenAI report the same lesson: improving infrastructure paid off more than improving the model.

- Ten components form the converging stack: system prompt, tool registry, sandbox, permission model, memory, context management, sub-agents, hooks, observability, evals.

- Planner / Generator / Evaluator is the dominant pattern: separating planning, execution and judging removes self-grading bias.

- EU AI Act high-risk obligations from 2 August 2026 — Articles 9–15 demand exactly the artefacts a harness produces.

- Audience: CTOs, platform-engineering leads, AI owners in mid-market and regulated industries.

What is a harness?

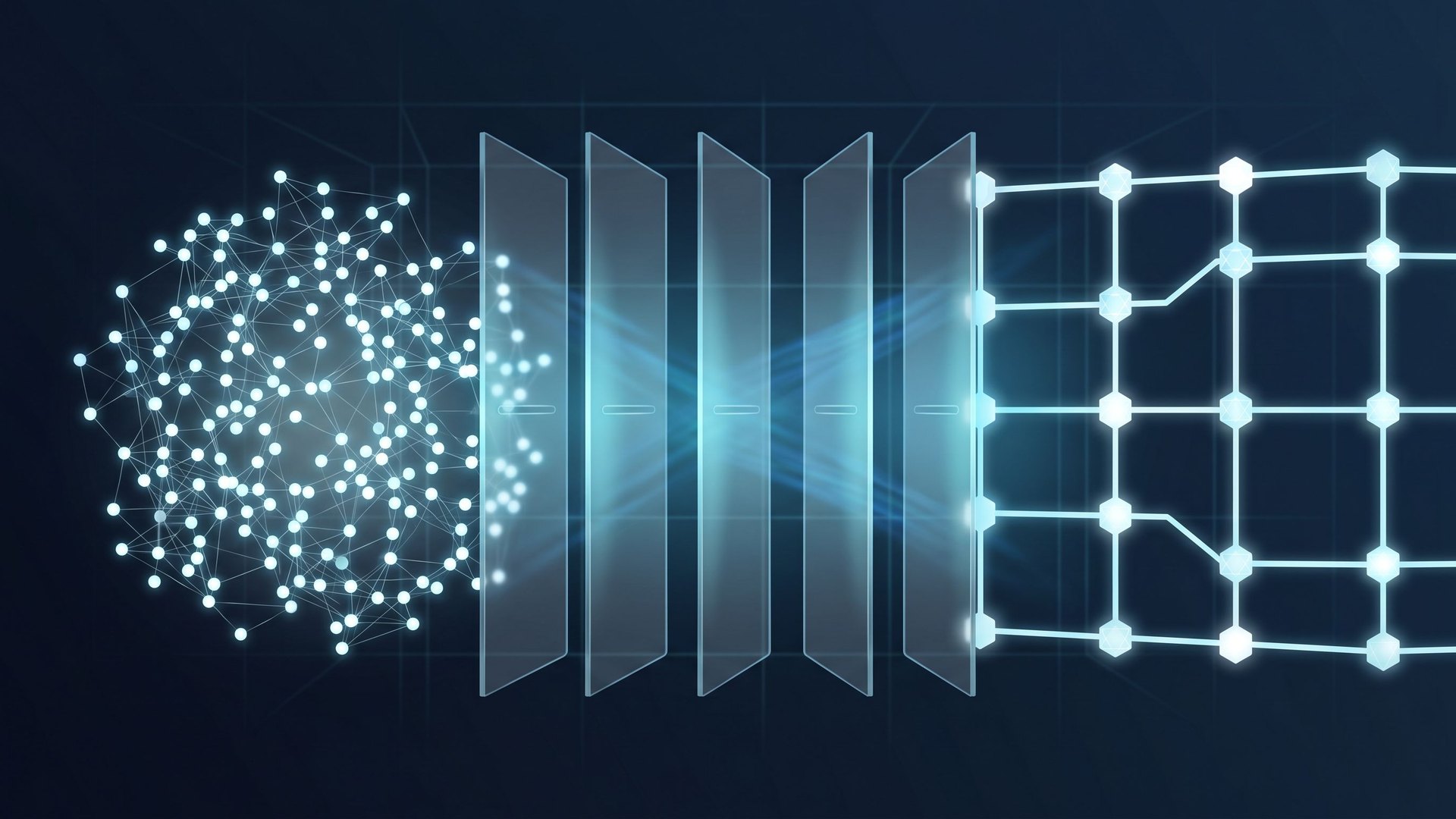

A harness is the structured runtime that turns a language model into an agent. The model emits tokens; the harness decides which tools the model can see, which actions it may take, what it should remember, when to spawn a sub-agent, how its output is judged, and what happens when something goes wrong. The shorthand the field has converged on: Agent = Model + Harness.

Phil Schmid offers a useful analogy: the model is the CPU, the context is RAM, the harness is the operating system — and the agent is the application running on top of all of it. No OS, no application, no matter how fast the CPU.
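A minimal sketch of that division of labour, assuming a stubbed model. The names `TOOLS`, `fake_model` and `run_agent` are hypothetical and stand in for no vendor's API. The model only proposes an action; the loop decides whether the tool exists, executes it, and caps the number of steps.

```python
from typing import Callable

# Hypothetical tool registry: the only actions the model can trigger.
TOOLS: dict[str, Callable[[str], str]] = {
    "echo": lambda arg: arg,
    "upper": lambda arg: arg.upper(),
}

def fake_model(history: list[str]) -> dict:
    """Stand-in for a real model call: proposes one tool call, then finishes."""
    if not any(line.startswith("tool:") for line in history):
        return {"action": "upper", "arg": "hello"}
    return {"action": "finish", "arg": history[-1].removeprefix("tool:")}

def run_agent(task: str, max_steps: int = 5) -> str:
    """Harness loop: the model proposes, the harness validates and executes."""
    history = [f"task:{task}"]
    for _ in range(max_steps):
        step = fake_model(history)
        if step["action"] == "finish":
            return step["arg"]
        tool = TOOLS.get(step["action"])
        if tool is None:
            # Unknown tools are reported back to the model, never executed.
            history.append(f"error:unknown tool {step['action']}")
            continue
        history.append(f"tool:{tool(step['arg'])}")
    raise RuntimeError("step budget exhausted")
```

Swapping `fake_model` for a real model call changes nothing about the loop, which is the point of the analogy: the control flow belongs to the harness.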

Distinctions from related disciplines

- Prompt engineering shapes a single input string for a single model call.

- Context engineering decides what information enters the context window at all — retrieval, compaction, skills, just-in-time loading.

- Agent frameworks like LangChain, AutoGen and CrewAI are libraries: building blocks you compose into a harness.

- Harness engineering is the systems-engineering discipline above all of these: designing, instrumenting, securing and evaluating the entire runtime that turns a model into a dependable worker. Prompt and context engineering are sub-disciplines inside the harness.

The term itself crystallised between November 2025 and April 2026. Anthropic published two seminal engineering posts (“Effective harnesses for long-running agents” and “Harness design for long-running application development”), OpenAI shipped “Harness engineering: leveraging Codex in an agent-first world”, and Birgitta Böckeler (Thoughtworks) consolidated the discussion on martinfowler.com. The first peer-reviewed paper carrying the term as a research field — Lin et al., “Agentic Harness Engineering” (arXiv:2604.25850) — shows that harness components can now even evolve themselves.

The anatomy of a harness

Lay the engineering posts from Anthropic, OpenAI, LangChain and Thoughtworks side by side and the same stack appears. Ten components have settled into a de facto standard — how you implement each is your competitive edge.

1. System prompt & skills

The only text every call sees. Every line should trace back to a past failure (Addy Osmani's “Ratchet Principle”); speculative rules are noise that fragments attention. Skills with progressive disclosure expand the prompt only when needed.

2. Tool registry

What does the agent actually get to see? MCP servers, file ops, search, code execution, sub-agent spawn. The field's rule of thumb: “Ten focused tools beat fifty overlapping ones.” Bloated tool menus are one of the most common causes of unreliable agents.

3. Sandbox & execution

The runtime environment — container, browser, isolated filesystem. It bounds the blast radius of a misfiring action. A productive sandbox makes iteration fast and rollback cheap; without one, every tool call is a risk.

4. Permission model

Which actions can the agent take autonomously, which require human confirmation, which are forbidden? Least privilege, allow/deny lists, kill switch, human-in-the-loop checkpoints. This is where Article 14 of the EU AI Act (“human oversight”) becomes concrete.

5. Memory & state

Short-term scratchpads (e.g. claude-progress.txt), a long-term store, and most importantly git commits as checkpoints. Anthropic recommends git not for tradition but because it's a tested, durable recovery mechanism that requires no new infrastructure.

6. Context management

Context windows are a resource, not a feature. Compaction, reset with a handoff artefact, skills with progressive disclosure and just-in-time retrieval all fight context rot. Anthropic's March 2026 post explicitly notes that for long tasks a clean reset beats further compaction.

7. Sub-agent orchestration

Specialised sub-agents for planning, execution and judging — ideally with model routing (large model for planning, small model for high-volume tool calls). The most important architectural choice in a harness and the biggest cost lever.

8. Hooks & middleware

Deterministic enforcement around non-deterministic model calls: typecheck, lint, policy gates, pre/post checks on every tool invocation. Hooks do not replace evals — they prevent classes of error the agent should never see in the first place.

9. Observability

Logs, metrics, distributed traces. OpenAI got Codex agents to query their own traces (LogQL/PromQL) at runtime to verify their own PRs. Observability is not an optional add-on but the sensor layer without which evals are blind.

10. Eval loop

Task-specific evaluations, separated from the generating agent. “Agents reliably skew positive when grading their own work.” No evals, no harness — only a demo. Hamel Husain: docs say what to do, telemetry says whether it worked, evals say whether the result is good.
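Component 4, the permission model, can be made concrete with a small sketch. It assumes tool calls are reduced to a `tool:argument` string and matched against glob patterns; the rule lists and the `decide` function are hypothetical, chosen only to show least privilege with a default-deny fallback and a separate "ask a human" tier.

```python
from enum import Enum
from fnmatch import fnmatchcase

class Decision(Enum):
    ALLOW = "allow"
    ASK = "ask"      # requires human confirmation
    DENY = "deny"

# Hypothetical policy: patterns are matched against "tool:argument" strings.
DENY_RULES = ["shell:rm -rf *", "http:*internal*"]
ASK_RULES = ["shell:git push*", "db:write*"]
ALLOW_RULES = ["fs:read*", "shell:git status", "search:*"]

def decide(tool: str, arg: str) -> Decision:
    """Deny rules win, then ask, then allow; anything unmatched is denied."""
    call = f"{tool}:{arg}"
    if any(fnmatchcase(call, rule) for rule in DENY_RULES):
        return Decision.DENY
    if any(fnmatchcase(call, rule) for rule in ASK_RULES):
        return Decision.ASK
    if any(fnmatchcase(call, rule) for rule in ALLOW_RULES):
        return Decision.ALLOW
    return Decision.DENY  # least privilege: default deny
```

Evaluating deny rules first means a broad allow pattern can never accidentally re-enable a forbidden action.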

Memory & state: the five-tier hierarchy

Component number 5 deserves its own treatment, because a clear consensus has emerged in 2026 — and because memory is the layer where most demo agents break in production. The de facto hierarchy has five tiers:

1. In-context window

The model's live view. Fast but expensive and finite. Every architectural choice underneath aims to keep this window free.

2. Scratchpad

Persistent notes the agent writes during a task (e.g. claude-progress.txt). Pattern: at ~30K tokens, dump to a summary and compress to 15K. This is the source of recovery after a context reset.

3. Episodic memory

Full index of past trajectories, retrieved by embedding similarity. Anthropic's Memory tool, Letta, Mem0, Zep, Cognee live here.

4. Semantic memory

Distilled facts and user models — independent of any single conversation. In mid-market setups often a small graph store, not just a vector index.

5. Procedural knowledge

Skills, tool-use patterns, AGENTS.md and skills directories. The part of "knowledge" that lives explicitly in the system prompt and skills — checkable, versionable.

Trade-off

Vector-first (Mem0 default) is simple but suffers relevance drift past ~5,000 items. Graph-augmented (Mem0 hybrid, Cognee, Zep) handles relational queries at the cost of schema work. OS-style paging (Letta) gives auditable behaviour at the cost of one tool call per memory op.
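The scratchpad pattern from tier 2 (compact at ~30K tokens, compress to 15K) can be sketched as follows. The token estimate of four characters per token is a rough assumption, and `summarise` is a placeholder that simply keeps the most recent notes; a real harness would ask a model to write the summary.

```python
COMPACT_AT = 30_000   # tokens; trigger from the tier-2 pattern
TARGET = 15_000       # tokens; size to compress down to

def estimate_tokens(text: str) -> int:
    """Rough heuristic (assumption): about four characters per token."""
    return len(text) // 4

def summarise(notes: list[str], budget_tokens: int) -> list[str]:
    """Placeholder summariser: keeps the newest notes that fit the budget."""
    kept: list[str] = []
    used = 0
    for note in reversed(notes):
        cost = estimate_tokens(note)
        if used + cost > budget_tokens:
            break
        kept.append(note)
        used += cost
    return list(reversed(kept))

def append_note(notes: list[str], note: str) -> list[str]:
    """Append a note and compact once the scratchpad crosses the threshold."""
    notes = notes + [note]
    if sum(estimate_tokens(n) for n in notes) >= COMPACT_AT:
        notes = summarise(notes, TARGET)
    return notes
```

Keeping the trigger and the target as separate constants leaves headroom after each compaction, so the scratchpad does not compact again on the very next note.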

Tool registry & MCP: the new substrate

In late 2025 Anthropic donated the Model Context Protocol (MCP) to the Linux Foundation. It now sits under the new Agentic AI Foundation with OpenAI, Google, Microsoft, AWS, Cloudflare, Block and Bloomberg as co-sponsors. MCP is industry infrastructure in 2026 — and the standard no harness architect can ignore.

The official MCP Registry (preview since September 2025) is now governed by the Linux Foundation. MCP v2.1 introduced Server Cards: a .well-known URL with structured metadata so clients and registries can discover capabilities without connecting. The MCP Tool Safety Working Group released SEP-2085, a tool-validation framework with SBOM support: tools are untrusted unless they originate from a certified server.

The unresolved tension

SEP-2085 codifies "untrusted by default", but the foundational critique from Simon Willison's April 2025 analysis, that MCP has no semantic isolation between tool descriptions and user content, remains open at the protocol layer. The DSN 2026 paper confirms: hosts do not independently verify LLM outputs. Defence sits in the host, not the wire format, which makes it a core design decision for every harness.

Documented incidents every harness architect should know in 2026: the postmark-mcp backdoor (Sept 2025), the GitHub MCP tool-poisoning chain by Invariant Labs (April–May 2025), MCPoison persistent code execution in Cursor (CVE-2025-54136), and the Anthropic mcp-server-git RCE chain (CVE-2025-68143/144/145, early 2026). In February 2026 over 8,000 unprotected MCP servers without authentication were scanned online. The Vulnerable MCP Project currently tracks 50+ CVEs, 13 of them critical.
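One host-side defence against changed tool definitions is to pin a fingerprint of each tool at approval time and refuse anything that no longer matches. The sketch below is an illustration under assumptions, not part of MCP or SEP-2085: `PinnedRegistry` and `fingerprint` are hypothetical names, and only the `name`, `description` and `inputSchema` fields of a tool definition are hashed.

```python
import hashlib
import json

def fingerprint(tool: dict) -> str:
    """Stable SHA-256 over the fields the model will actually read."""
    canonical = json.dumps(
        {k: tool.get(k) for k in ("name", "description", "inputSchema")},
        sort_keys=True,
    )
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()

class PinnedRegistry:
    """Host-side allow-list: a tool is exposed only if its fingerprint
    matches the one a human approved earlier."""

    def __init__(self) -> None:
        self._pins: dict[str, str] = {}

    def approve(self, tool: dict) -> None:
        self._pins[tool["name"]] = fingerprint(tool)

    def is_trusted(self, tool: dict) -> bool:
        return self._pins.get(tool["name"]) == fingerprint(tool)
```

Pinning catches a description that changes after approval; it does not help if the tool was malicious when it was first approved, and it does not add the semantic isolation the protocol lacks.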

Planner / Generator / Evaluator: the three-agent pattern

Anthropic's March 2026 post “Harness design for long-running application development” consolidates the pattern that proved superior in practice through 2025: three specialised agents working a single task together. Self-grading (one agent both generating and judging) produces systematically optimistic verdicts. Separation removes the bias.

Planner

Job: decompose the task into a sprint contract before any code is generated.

Defines acceptance criteria, input/output formats, constraints. Negotiates with the user about what “done” means. Produces a checkable artefact the generator can build against and the evaluator can measure against.

Generator

Job: fulfil the sprint contract — write code, execute tool calls, maintain memory, commit checkpoints.

Has no incentive to flatter the result because another agent judges it. Maximum focus on the task, clean handoff artefacts at the end of each sprint.

Evaluator

Job: check against the planner's acceptance criteria — with tools, tests, telemetry.

Asks structured questions: are the tests green? Does observed behaviour match spec? If not, where? Judges outcome, not effort.

The sprint contract is the connective tissue: a negotiated, written agreement about what this sprint achieves. The planner writes it, the generator fulfils it, the evaluator checks against it. For long tasks — Anthropic's original motivation — sprint contracts also become handoff artefacts between context windows: a fresh agent picks up the thread without inheriting the full context.
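A sprint contract can be as small as a goal plus a list of machine-checkable criteria. The following sketch assumes the generator hands over an artefact as a plain dictionary; `SprintContract`, `Criterion`, `evaluate` and the example contract are hypothetical names for illustration, not Anthropic's implementation.

```python
from dataclasses import dataclass, field
from typing import Callable

@dataclass
class Criterion:
    name: str
    check: Callable[[dict], bool]   # inspects the generator's artefact

@dataclass
class SprintContract:
    goal: str
    criteria: list[Criterion] = field(default_factory=list)

def evaluate(contract: SprintContract, artefact: dict) -> dict:
    """Evaluator: judge the outcome against the contract, not the generator's claims."""
    failed = [c.name for c in contract.criteria if not c.check(artefact)]
    return {"passed": not failed, "failed": failed}

# Planner output (hypothetical example contract).
contract = SprintContract(
    goal="Add a /health endpoint",
    criteria=[
        Criterion("tests green", lambda a: a["tests_failed"] == 0),
        Criterion("endpoint returns 200", lambda a: a["health_status"] == 200),
    ],
)
```

Because the verdict lists the failed criteria by name, it doubles as the handoff artefact for the next sprint or the next context window.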

When multi-agent — and when not?

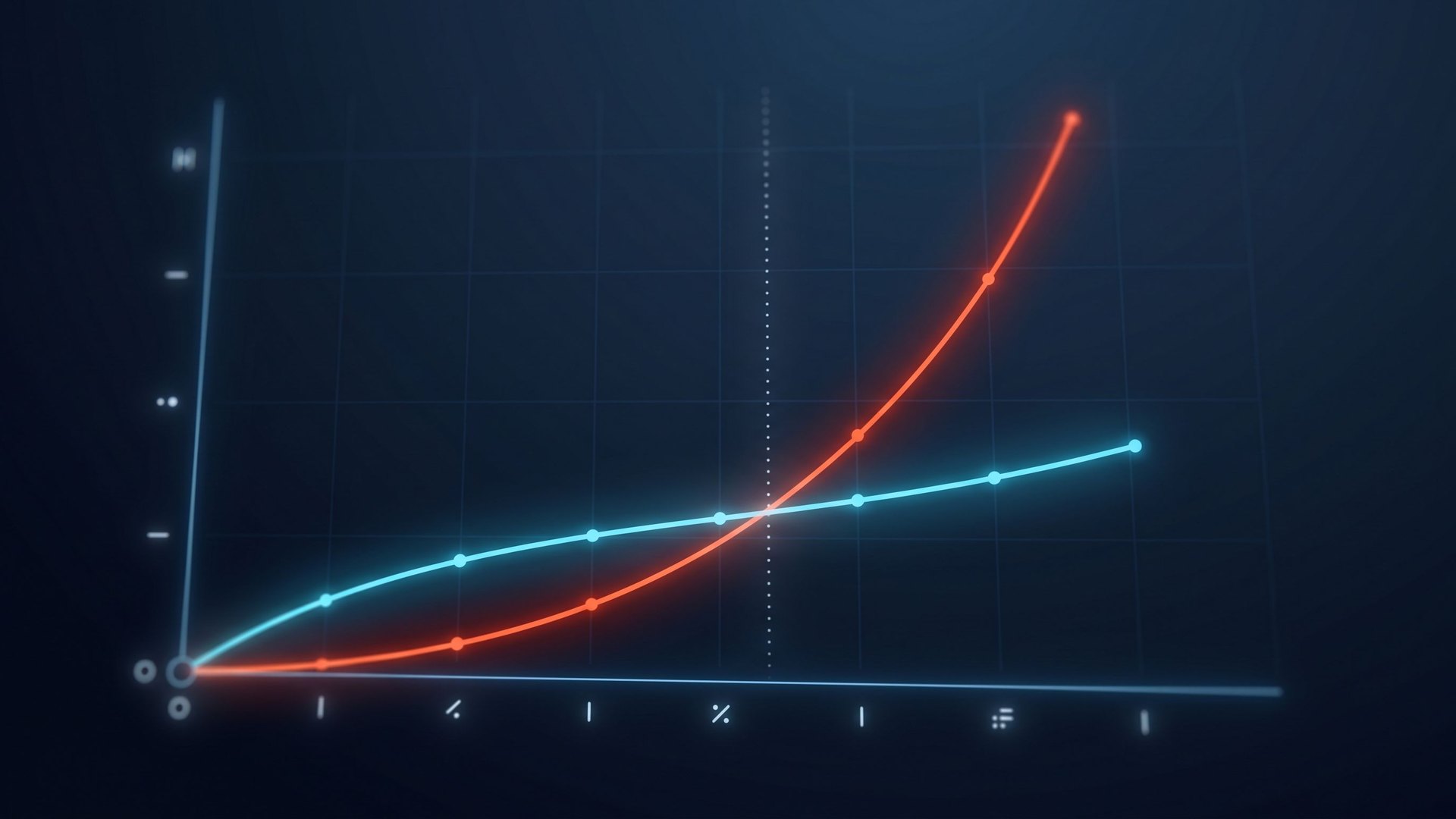

The unresolved industry tension is worth confronting. Cognition AI (the Devin team) argues in "Don't Build Multi-Agents" that sub-agents create a telephone game: details lost between agents become incompatible outputs at scale. Devin itself runs single-threaded with aggressive context engineering. Anthropic, conversely, reports a +90.2 % uplift on internal research evals from an Opus orchestrator with Sonnet sub-agents in its multi-agent research post.

The reconciliation that holds up in practice (Jason Liu): the disagreement is about task topology, not architectural religion. A linear single agent wins for write-heavy, context-coupled tasks (Devin-style coding). Multi-agent wins for read-heavy, parallelisable tasks (research, code search), or for adversarial pairs like Anthropic's GAN-style harness, where evaluator and generator are structurally meant to disagree. Cognition itself softened its position in "Multi-Agents: What's Actually Working": parallel sub-agents work for read-only, embarrassingly parallel tasks; they break for write/coordination tasks.

Patterns & anti-patterns 2026

Distil the published engineering posts and conference talks (AI Engineer Europe, NeurIPS 2025) and you can see what stuck — and which reflexes you must un-learn.

Proven patterns

- Planner / Generator / Evaluator separation — removes self-grading bias.

- Sprint contracts negotiated before each sprint — checkable acceptance criteria.

- Git-as-checkpoint — durable recovery without new infrastructure.

- A single Providers interface for auth, telemetry, feature flags (OpenAI Codex).

- Skills with progressive disclosure: defeating context rot.

- Self-instrumented agents — agents that read their own traces.

- “Success is silent, failures are verbose.” — surface only behaviour-changing tool errors.
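The last pattern in the list is easy to show in code for checks such as lint or test runs. This is a sketch under assumptions: `ToolResult` and `render_for_model` are hypothetical names, and the choice to keep the tail of stderr is a heuristic, not a documented rule.

```python
from dataclasses import dataclass

@dataclass
class ToolResult:
    tool: str
    exit_code: int
    stdout: str
    stderr: str

def render_for_model(result: ToolResult, max_chars: int = 2_000) -> str:
    """One-line acknowledgement on success, error detail on failure."""
    if result.exit_code == 0:
        return f"{result.tool}: ok"
    # Keep the tail of stderr, where the actionable message usually sits.
    detail = result.stderr[-max_chars:]
    return f"{result.tool}: FAILED (exit {result.exit_code})\n{detail}"
```

A passing lint run then costs the context window a handful of tokens, while a failing one carries enough detail for the agent to act on.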

Anti-patterns

- “Wait for the next model” — misdiagnosing harness problems as model problems.

- Tool-menu bloat — fifty overlapping tools fragment attention.

- Self-grading — the same agent generating and judging.

- Over-constrained AGENTS.md: rules without ties to actual failures are noise.

- Compaction as a panacea: on long tasks a reset beats further compaction.

- Monitoring without containment — the 58 %/37 % gap: you see problems, you can't stop them.

- Eval platform without skills — buying observability without teaching the agent to use it.

The 2026 eval stack: Inspect, Braintrust, Phoenix

If the eval loop is the most important component of a harness (anatomy #10), then in 2026 the tooling has finally matured. The dominant stack has a clear shape:

| Tool | Role in the stack | Where it runs | Licence / sponsor |

|---|---|---|---|

| Inspect AI (UK AISI) | De facto open standard for serious agent evals: ReAct, multi-agent primitives, MCP-tool support, external-agent bridge to Claude Code/Codex/Gemini CLI as black boxes | Pre-deployment evals of frontier models, research, regulator audits | Open source; UK AI Safety Institute |

| Braintrust | CI gating: GitHub Action posts experiment diffs onto PRs, converts production failures into permanent test cases | Pull-request pipeline, continuous eval upkeep | Commercial SaaS |

| Arize Phoenix | OTel-native observability: shows what happened in production | Production tracing | Open source / Arize |

| Snorkel AI | Eval-dataset co-development — programmatic labelling, weak supervision | Eval-set construction, especially domain-specific | Commercial |

| METR | Capability time-horizons (50 %-success task length) | Strategy discussion, capacity planning | Non-profit research |

Hamel Husain's eval taxonomy is the most-quoted methodology: trace → open coding → axial coding → failure taxonomy → LLM judges. His follow-up Evals Skills for Coding Agents distils it: "Improving the infrastructure around the agent mattered more than improving the model."

What the numbers say in 2026

- SWE-Bench Verified: Claude Sonnet 4.6 at 79.6 %. Claude Opus 4.7 leads the April 2026 board.

- SWE-Bench Pro public: Claude Opus 4.7 64.3 %, GPT-5.5 58.6 %.

- SWE-Bench Pro commercial private (276 instances, 18 proprietary codebases): Claude Opus 4.1 drops from 22.7 % to 17.8 %; GPT-5 from 23.1 % to 14.9 %. The public→private gap is the single most important number in any enterprise-readiness argument.

- Terminal-Bench 2.0: GPT-5.5 82.7 % (SOTA), Claude Opus 4.7 69.4 %.

- METR time horizon: Claude Opus 4.6 has a 50 %-success horizon of 14h 30m (Feb 21, 2026). Trend line: doubling every ~7 months.

The number that matters in any B2B conversation: the public-private gap. Anyone running high-risk AI on their own codebases should plan against the private pass rates, not the public ones.
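To make the gap comparable across models, it helps to express it as a relative drop rather than a difference in percentage points. The short calculation below uses only the SWE-Bench Pro figures quoted above; `relative_drop` is a hypothetical helper name.

```python
def relative_drop(public_pct: float, private_pct: float) -> float:
    """Share of the public pass rate that disappears on private code, in percent."""
    return (public_pct - private_pct) / public_pct * 100

# Figures quoted above for SWE-Bench Pro (public -> commercial private).
scores = {
    "Claude Opus 4.1": (22.7, 17.8),
    "GPT-5": (23.1, 14.9),
}

for model, (public, private) in scores.items():
    print(f"{model}: {relative_drop(public, private):.1f} % relative drop")
```

On these numbers Claude Opus 4.1 loses roughly 21.6 % of its public pass rate and GPT-5 roughly 35.5 %, so two models that look nearly identical on the public set differ markedly on private code.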

Cost & routing economics

Token economics is the harness-engineering question CFOs care about. The defensible 2026 numbers (same task, same benchmark):

Claude Code vs. Cursor

Claude Code consumes 5.5× fewer tokens on identical tasks (a 100K-token Cursor task ran on ~18K in Claude Code). Mean cost across a 100-task suite: $0.28 (Claude Code Max) vs. $0.19 (Cursor Pro).

Claude Code vs. Aider

Claude Code consumes 4.2× more tokens than Aider on the same tasks — the budget goes into planning. Result: 78 % first-pass pass rate (Claude Code) vs. 71 % (Aider). 7 points of accuracy at 2.8× the cost.

Sub-agent routing (Anthropic)

Opus as orchestrator, Sonnet for sub-agents: +90.2 % on internal research evals against single-agent Opus, at ~15× more tokens. The pattern only pays off above a complexity threshold.

RouteLLM (LMSYS)

A matrix-factorisation router achieves 95 % of GPT-4 quality with 26 % GPT-4 calls — ~48 % cheaper than the random baseline. On MT-Bench: 85 % cost reduction at matched quality.
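The arithmetic behind routing is simple enough to sketch. The prices below are hypothetical placeholders chosen only to make the calculation visible, and the baseline here is "always call the strong model", which is not the random-router baseline RouteLLM reports against; only the 26 % share is taken from the figures above.

```python
def blended_cost(strong_share: float, strong_price: float, weak_price: float) -> float:
    """Average price per call when only `strong_share` of calls hit the strong model."""
    return strong_share * strong_price + (1 - strong_share) * weak_price

# Hypothetical prices per call, chosen only to make the arithmetic visible.
STRONG_PRICE = 1.00
WEAK_PRICE = 0.10

routed = blended_cost(0.26, STRONG_PRICE, WEAK_PRICE)   # 26 % strong-model calls
saving = 1 - routed / STRONG_PRICE                       # versus always-strong
print(f"routed cost per call: {routed:.3f}, saving vs. always-strong: {saving:.1%}")
```

The saving depends almost entirely on the price ratio between the two models, which is why routing numbers from one vendor pairing rarely transfer to another.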

The $47,000 loop

The pointed proof of why pre-execution budget enforcement is not optional: in November 2025, four LangChain A2A agents spent 11 days in an Analyser↔Verifier infinite loop. Cost: roughly $47,000. Root cause: monitoring dashboards existed — but no pre-execution budget enforcement. Industry estimate for 2026: ~$400M aggregate FinOps leak across the Fortune 500.
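Pre-execution enforcement means the check runs before the spend, not on a dashboard afterwards. A minimal sketch, assuming the harness can estimate the tokens of a planned call: `Budget` and `BudgetExceeded` are hypothetical names, and the three caps mirror tokens, tool calls and wall-clock time.

```python
import time
from dataclasses import dataclass, field

class BudgetExceeded(RuntimeError):
    pass

@dataclass
class Budget:
    max_tokens: int
    max_tool_calls: int
    max_seconds: float
    tokens_used: int = 0
    tool_calls: int = 0
    started_at: float = field(default_factory=time.monotonic)

    def check(self, planned_tokens: int) -> None:
        """Call BEFORE every model or tool call; raises instead of overspending."""
        if self.tokens_used + planned_tokens > self.max_tokens:
            raise BudgetExceeded("token budget would be exceeded")
        if self.tool_calls + 1 > self.max_tool_calls:
            raise BudgetExceeded("tool-call budget would be exceeded")
        if time.monotonic() - self.started_at > self.max_seconds:
            raise BudgetExceeded("wall-clock budget exceeded")

    def record(self, tokens: int) -> None:
        """Call AFTER a call completes to book the actual spend."""
        self.tokens_used += tokens
        self.tool_calls += 1
```

An Analyser↔Verifier loop sharing one such budget would stop at the first call that crosses a cap, whichever of the three limits it reaches first.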

Harness engineering & the EU AI Act (from 2 August 2026)

High-risk obligations under Regulation (EU) 2024/1689 apply from 2 August 2026. Anyone deploying AI agents in critical infrastructure, HR, law enforcement, medicine or education will then need to satisfy Articles 9 to 15. The good news: a well-built harness produces exactly the artefacts the AI Act requires. The bad news: with no harness, there is nothing to produce.

| EU AI Act article | What it requires | Where it lives in the harness | Status |

|---|---|---|---|

| Art. 9 — Risk management | Continuous risk assessment across the entire lifecycle. | Eval loop, evaluator agent, FRIA documentation | From Aug 2026 |

| Art. 10 — Data quality | Representative, error-free, low-bias training and input data. | Tool-registry inputs, memory/state hygiene, dataset provenance | From Aug 2026 |

| Art. 11 — Technical documentation | Complete technical documentation of decision logic. | Harness architecture docs, AGENTS.md, skills library | From Aug 2026 |

| Art. 12 — Logging | Automatic event logging during operation. | Observability layer, distributed traces, tool-call logs | From Aug 2026 |

| Art. 13 — Transparency | Intelligible information for deployers. | Skill descriptions, tool cards, sprint-contract artefacts | From Aug 2026 |

| Art. 14 — Human oversight | Effective monitoring and intervention by humans. | Permission model, kill switch, human-in-the-loop checkpoints | From Aug 2026 |

| Art. 15 — Accuracy & robustness | Appropriate level of accuracy, robustness and cybersecurity. | Sandbox, hooks, deterministic policy gates, eval loop | From Aug 2026 |

The governance-containment gap

Industry studies in 2026 keep finding the same pattern: 58 % of enterprises monitor their AI agents, but only 37–40 % can actually stop or contain one. This gap is not technical; it is a harness gap. Anyone taking Article 14 (“human oversight”) seriously must plan for containment: kill switch, permission gates, sandbox isolation, A2A threat modelling. Monitoring without containment is compliance theatre.

Article 57 also requires every EU member state to operate at least one regulatory AI sandbox by 2 August 2026. Early experimentation under supervision is open only to those who already understand “harness” as a concept.

Article 14 ↔ hooks: the concrete crosswalk

Article 14 ("human oversight") is the compliance requirement most harnesses fail in 2026. The Augment analysis AI Coding Tools EU AI Act Compliance states bluntly: "no source confirms mandatory approval gates, interrupt or override controls meeting Article 14 specificity" for any commercial harness. That gap is exactly where harness engineering becomes concrete.

| Oversight obligation (Art. 14) | Realisation in the harness |

|---|---|

| Ability to intervene | PreToolUse hook with approval gate; Stop hook |

| Ability to override the system | Kill-switch (kernel-level interrupt); enforceable read-only mode |

| Detect anomalies | Observability hook; self-querying agents reading their own traces |

| Decide not to use the system | Capability disable; SessionStart hook with risk check |

| Auditability | PostToolUse hook persists complete trace; Git commit tag |

Claude Code leads the field in 2026 with 17 lifecycle hooks (PreToolUse, PostToolUse, UserPromptSubmit, Stop, SubagentStop, SessionStart and more). OpenAI Codex CLI ships OS-enforced sandboxing (macOS Seatbelt, Linux Landlock+bubblewrap, Windows restricted process tokens). Cline is open source with a transparent permission UI. Aider uses Git as the only persistent state and therefore as audit trail. None of these harnesses, however, fully satisfies Article 14; that is the open wedge.
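The crosswalk rows for intervention, override and auditability can be sketched as a thin wrapper around every tool call. This is a generic illustration, not the hook API of Claude Code or any other product: `pre_tool_use`, `post_tool_use`, `call_tool`, `KILL_SWITCH` and the set of high-risk tools are all hypothetical, and the in-memory list stands in for a durable trace store.

```python
import json
import threading
from typing import Callable

KILL_SWITCH = threading.Event()           # set by an operator to halt the agent
AUDIT_LOG: list[str] = []                 # stand-in for a durable trace store
HIGH_RISK_TOOLS = {"deploy", "db_write"}  # hypothetical high-blast-radius tools

def pre_tool_use(tool: str, args: dict, approve: Callable[[str, dict], bool]) -> bool:
    """Intervene: block when the kill switch is set or a human declines."""
    if KILL_SWITCH.is_set():
        return False
    if tool in HIGH_RISK_TOOLS:
        return approve(tool, args)
    return True

def post_tool_use(tool: str, args: dict, outcome: str) -> None:
    """Auditability: persist every attempted call, including blocked ones."""
    AUDIT_LOG.append(json.dumps({"tool": tool, "args": args, "outcome": outcome}))

def call_tool(tool: str, args: dict, run: Callable[[dict], str],
              approve: Callable[[str, dict], bool]) -> str:
    """Run a tool only after the pre-hook passes; log the outcome either way."""
    if not pre_tool_use(tool, args, approve):
        post_tool_use(tool, args, "blocked")
        return "blocked"
    result = run(args)
    post_tool_use(tool, args, "executed")
    return result
```

Logging blocked calls as well as executed ones matters for oversight: the trace then shows what the agent tried to do, not only what it was allowed to do.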

Production harnesses 2026

The fastest way to learn harness engineering is to study production systems — ideally those with published architecture write-ups. Six references every platform-engineering lead should know in 2026.

Claude Code (Anthropic)

Anthropic markets Claude Code as a “general-purpose agent harness”. Documented six-hour autonomous runs building full-stack apps. Reference implementation for skills, sub-agents, hooks and memory with git checkpoints. Lesson: what a modern harness permission model looks like.

OpenAI Codex App Server

Built itself: ~1M LoC, 1,500 merged PRs, 3→7 engineers in 5 months. Throughput: 3.5 PRs per engineer per day. Lesson: a single Providers interface, telemetry as compliance backbone, self-querying agents.

Cursor

IDE-integrated coding harness with its own model routing and composer mode. Lesson: how UX design and harness design depend on each other — a good harness needs a frontend that builds trust, not just one that streams tokens.

Cline

Open-source harness with transparent permission logic and full plan/act separation. Lesson: how to build a harness so every action is visible before execution — relevant for Article 14 of the EU AI Act.

Aider

Git-native coding harness optimised for pair-programming workflow. Lesson: git as the only persistent state; minimal sandbox; clean demonstration that complexity is not an end in itself.

LangChain DeepAgents

Open-source harness with default prompts, planner, filesystem access. Lesson: a clean starting point for build-it-yourself — readable, well-documented, deliberately minimal in its assumptions.

Head-to-head: Claude Code, Codex, Cursor, Cline, Aider

Anyone choosing a harness in 2026 — or modelling what their own should look like — compares along these axes:

| Axis | Claude Code | Codex CLI | Cursor | Cline | Aider |

|---|---|---|---|---|---|

| Tool registry | MCP-native, broad | MCP + OpenAI tools | MCP + proprietary | MCP, multi-provider | Provider-agnostic via LiteLLM |

| Sandbox | Permission rules + opt. sandbox | OS-enforced (Seatbelt / Landlock+bubblewrap) | IDE process | IDE + diff preview | Git commits as rollback |

| Permission model | 17 lifecycle hooks; per-tool | OS capability-style | Per-action approval | Granular auto-approval categories | Human-in-the-loop CLI |

| Memory | Skills + scratchpad + Memory tool | Codex Memory (limited) | Project rules + chat | Project rules | Git history |

| Eval integration | Inspect bridge; Braintrust/Phoenix | Inspect bridge | — | — | — |

| Hook system | 17 hooks (bash/HTTP/LLM) | — | Limited | Pre-action approvals | Git pre-commit |

| EU AI Act readiness | Hooks + AI-authorship tags; Anthropic on Code of Practice | Unclear; OpenAI not on Code of Practice | Closed source weakens auditability | Open source + transparent flow | Strong via Git, no agent-specific governance |

| Open source | No | No | No | Apache 2.0 | Apache 2.0 |

| Model lock-in | Anthropic only | OpenAI only | Multi-provider | Multi-provider | Any (LiteLLM) |

Sources: jock.pl, Haseeb Qureshi, aimultiple, plus the official Claude Code sandboxing docs. The matrix shifts every quarter; use it as a diagnostic grid, not a buying guide.

Production failure modes — and how a harness contains them

What actually breaks in production agent systems in 2026? Eight documented classes show up in nearly every post-mortem:

1. Infinite loops / agent duels

Sub-agents fall into cyclical evaluation or correction loops. The $47,000 LangChain A2A loop (Nov 2025); the Claude Code audit-stamp invalidation loop (April 2026).

2. Cost blowups

Same root cause as #1, plus runaway sub-agent recursion. Industry estimate for 2026: $400M aggregate FinOps leak across the Fortune 500.

3. Data exfiltration via tools

GitHub MCP private-repo exfil; Supabase Cursor SQL leak into a public support thread; WhatsApp MCP rug-pull demo.

4. Tool poisoning / supply chain

postmark-mcp backdoor; MCPoison persistent code execution (CVE-2025-54136); Anthropic mcp-server-git RCE chain.

5. Goal hijacking

OWASP ASI01: agents redirected by adversarial issue/email/document content. Classic vector: a bug report contains instructions the agent reads as a job.

6. Rogue drift

Deviation from intended behaviour with valid permissions. ISACA names this in 2026 as the hardest detection problem: nothing is being violated.

7. Zero-click prompt injection

The first publicly documented zero-click AI vulnerability in 2025. Content from a tool output is enough to trigger actions — without user interaction.

8. Sub-agent context loss

Cognition's telephone game: details lost between sub-agents produce incompatible outputs. Mitigation: sprint contracts and full trace sharing.

The shared mitigation pattern (synthesised from the post-mortems linked above): pre-execution budget caps (tokens AND wall-clock AND tool-calls), allow-list tool registries, OS-level sandboxing for execution, and Article-14-style approval gates on high-blast-radius actions. Monitoring alone does not close the governance-containment gap; only pre-execution enforcement does.

Implementation roadmap: from demo agent to harness

If you have a working demo agent today and want to put it into production, the path is four phases. Order matters — each phase delivers the prerequisites for the next.

Phase 1: Inventory (week 1)

Audit

Inventory of tool registry, permission model, existing logging and existing evals. Identify the ten components — what exists, what's missing, what's half-built. Map onto EU AI Act Articles 9–15. Output: harness maturity report.

Phase 2: Eval loop (weeks 2–4)

Foundation

Task-specific evals are built before architecture is changed. No evals, no before/after comparison. Generator/evaluator separation as the first structural move. Output: reproducible pass rate on a golden set of tasks.

Phase 3: Permission & containment (weeks 4–6)

Compliance

Kill switch, permission gates, sandbox isolation. Closes the governance-containment gap and makes Article 14 of the EU AI Act satisfiable. Output: the agent is actually stoppable — not merely observable.

Phase 4: Sub-agent architecture (weeks 6–10)

Scale

Planner / Generator / Evaluator separation as architecture. Model routing for cost control. Skills with progressive disclosure. Output: the harness scales across longer tasks without collapsing into context rot.

innobu harness advisory

innobu helps mid-market and regulated enterprises move from a compelling demo agent to a production-ready, EU-AI-Act-compliant harness. Four modules, available individually or combined.

Strategic significance for 2026 and beyond

Harness engineering is not optional in 2026. Anyone running production AI agents — whether for coding, customer service, energy market communication, or mid-market credit decisioning — is making strategic choices here that will compound for years.

Competitive advantage across model generations

Models become commodities; harness investments amortise across every model generation. With a clean harness, you can deploy the best available model — without locking in a vendor.

Compliance as a by-product

EU AI Act, NIS2, DORA, sector-specific regulation — all demand the same building blocks: logging, human oversight, risk management, robustness. A well-built harness produces the evidence almost for free.

Cost control

Sub-agent routing, compaction, context reset and small specialised models for high-volume tool calls are the biggest levers on per-task cost. Without a harness, you cannot pull any of them.

DACH visibility

German-language voices on harness engineering practice are thin in 2026. Companies that document field experience early become the reference point for industry and regulators — with concrete consequences for sandbox access and pilot partnerships.


Frequently asked questions

Agentic Harness Engineering is the discipline of designing and instrumenting the runtime around a language model — tool registry, sandbox, memory, sub-agents, hooks, observability, eval loop — so that the model acts reliably as an agent. The shorthand: Agent = Model + Harness. If you're not the model, you're the harness — and the harness decides whether a demo becomes a product.

An agent framework (LangChain, AutoGen, CrewAI) is a library of building blocks. A harness is the complete instrumented system you build out of those blocks — including permission model, evals, observability, sub-agent orchestration and recovery logic. Frameworks are tools, harnesses are products. You can build a harness without a framework; a framework without a harness is just code.

Yes. Generic model benchmarks tell you nothing about whether your agent solves your task. Hamel Husain puts it bluntly: “Documentation tells the agent what to do. Telemetry tells it whether it worked. Evals tell it whether the output is good.” A harness without task-specific evals is blind — and produces no defensible compliance evidence under Article 9 of the EU AI Act.

Substantial. EU AI Act high-risk obligations apply from 2 August 2026. Articles 9–15 require risk management, logging, human oversight and robustness, which are precisely the components a harness delivers. Industry studies show a governance-containment gap: 58 % of enterprises monitor agents, but only 37–40 % can actually stop one. Penalties under the Act reach 35M EUR or 7 % of global turnover for prohibited practices; violations of the high-risk obligations are capped at 15M EUR or 3 %.

A typical harness audit takes 2–4 weeks: 1 week of inventory (tool registry, permission model, logging, existing evals), 1–2 weeks of gap analysis with mapping onto EU AI Act articles, and 1 week of roadmap. Output: a prioritised action catalogue with effort estimates. For deeper work, modules 2–4 of our advisory follow.

No. Coding agents are the loudest case in 2026 because that's where the most evidence exists (Claude Code, Codex, Cursor, Cline, Aider). But every production agent — in customer service, energy market communication, financial advisory, HR workflows, research — needs the same harness components: tool registry, permission model, memory, evals, observability. The domain changes, the discipline doesn't.